This post is a part of the DP-600: Implementing Analytics Solutions Using Microsoft Fabric Exam Prep Hub; and this topic falls under these sections:

Prepare data

--> Transform data

--> Identify and resolve duplicate data, missing data, or null values

Ensuring data quality is foundational for reliable analytics. Duplicate records, missing values, and nulls can lead to inaccurate aggregations, misleading insights, and broken joins. Microsoft Fabric provides multiple tools and techniques to identify, investigate, and resolve these issues during data preparation.

Why Data Quality Matters

Poor data quality can cause:

- Incorrect business metrics (e.g., inflated counts)

- Failed joins or mismatches

- Incorrect aggregates or KPIs

- Discrepancies across reports

The DP-600 exam expects you to know how to detect and fix these issues using Fabric’s transformation tools — without degrading performance or losing important data.

Key Data Quality Issues

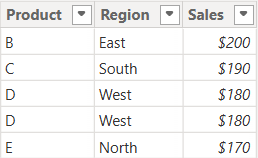

1. Duplicate Data

Duplicates occur when the same record appears multiple times.

Common causes:

- Repeated ingestion jobs

- Incorrect joins

- Source system errors

Impact of duplicates:

- Inflated metrics

- Misleading counts

- Distorted analytics

2. Missing Data

Missing data refers to complete absence of expected rows for certain categories or time periods.

Examples:

- No sales records for a specific store in a date range

- Missing customer segments

Impact:

- Bias in analysis

- Understated performance

3. Null Values

Nulls represent unknown or undefined values in a dataset.

Common cases:

- Missing customer name

- Missing numeric values

- Unpopulated fields in incomplete records

Consequences:

- SQL functions may ignore nulls

- Aggregations may be skewed

- Joins may fail or produce incorrect results

Tools and Techniques in Microsoft Fabric

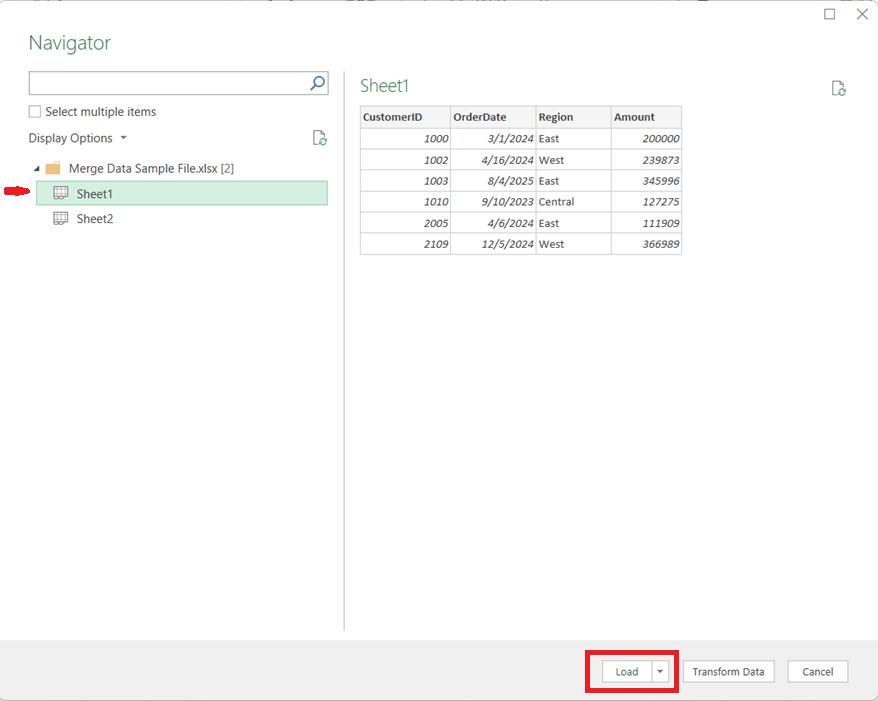

1. Power Query (Dataflows Gen2 / Lakehouse)

Power Query provides a visual and programmatic interface to clean data:

- Remove duplicates:

Home → Remove Rows → Remove Duplicates - Replace or fill nulls:

Transform → Replace Values

Or use Fill Up / Fill Down - Filter nulls:

Filter rows where column is null or not null

Benefits:

- No-code/low-code

- Reusable transformation steps

- Easy preview and validation

2. SQL (Warehouses / Lakehouse SQL Analytics)

Using SQL, you can identify and fix issues:

Detect duplicates:

SELECT Col1, Col2, COUNT(*) AS Cnt

FROM table

GROUP BY Col1, Col2

HAVING COUNT(*) > 1;Remove duplicates (example pattern):

WITH RankedRows AS (

SELECT *, ROW_NUMBER() OVER (PARTITION BY keycol ORDER BY keycol) AS rn

FROM table

)SELECT * FROM RankedRows WHERE rn = 1;Replace nulls:

SELECT COALESCE(column, 0) AS column_fixed

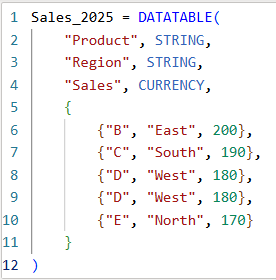

FROM table;3. Spark (Lakehouses via Notebooks)

Identify nulls:

df.filter(df["column"].isNull()).show()Drop duplicates:

df.dropDuplicates(["keycol"])Fill nulls:

df.na.fill({"column": "Unknown"})

Best Practices for Resolution

Addressing Duplicates

- Use business keys (unique identifiers) to define duplicates

- Validate whether duplicates are true duplicates or legitimate repeats

- Document deduplication logic

Handling Nulls

- Use domain knowledge to decide substitute values

- Zero for numeric

- “Unknown” or “Not Provided” for text

- Preserve nulls when they carry meaning (e.g., missing responses)

Handling Missing Data

- Understand the business meaning

- Is absence valid?

- Should data be imputed?

- Or should missing rows be generated via reference tables?

Data Profiling

- Use profiling to understand distributions and quality:

- Column completeness

- Unique value distribution

- Null frequency

Data profiling helps you decide which cleaning steps are required.

When to Clean Data in Fabric

Data quality transformations should be performed:

- Early in the pipeline (at the ingestion or transformation layer)

- Before building semantic models

- Before aggregations or joins

- Before publishing curated datasets

Early cleaning prevents issues from propagating into semantic models and reports.

Exam Scenarios

In DP-600 exam questions, you might see scenarios like:

- Metrics appear inflated due to duplicate records

- Reports show missing date ranges

- Joins fail due to null key values

- Aggregations ignore null values

Your job is to choose the correct transformation action — e.g., filtering nulls, deduplicating, replacing values, or imputing missing data — and the best tool (Power Query vs SQL vs Spark).

Key Takeaways

- Duplicate rows inflate counts and distort analytics.

- Missing rows can bias time-series or segment analysis.

- Null values can break joins and cause incorrect aggregation results.

- Effective resolution relies on understanding business context and using the right Fabric tools.

- Clean data early for better downstream performance and governance.

Final Exam Tip

If a metric doesn’t look right, think data quality first — missing or null values and duplicates are one of the most common real-world issues covered in DP-600 scenarios.

Practice Questions:

Here are 10 questions to test and help solidify your learning and knowledge. As you review these and other questions in your preparation, make sure to …

- Identifying and understand why an option is correct (or incorrect) — not just which one

- Look for and understand the usage scenario of keywords in exam questions to guide you

- Expect scenario-based questions rather than direct definitions

General Exam Tips for this section

If something looks wrong in a report:

- Too high? → Check for duplicates

- Blank or missing? → Check for nulls or missing rows

- Not joining correctly? → Check nulls and key integrity

Question 1

Which issue is most likely to cause inflated totals in aggregated metrics?

A. Null values in numeric columns

B. Missing rows for a time period

C. Duplicate records

D. Incorrect column data types

✅ Correct Answer: C

Explanation:

Duplicate records result in the same data being counted more than once, which inflates sums, counts, and averages.

Question 2

In Power Query, which action is used to remove duplicate rows?

A. Filter Rows

B. Group By

C. Remove Duplicates

D. Replace Values

✅ Correct Answer: C

Explanation:

The Remove Duplicates operation removes repeated rows based on selected columns.

Question 3

Which SQL function is commonly used to replace null values with a default value?

A. NULLIF

B. ISNULL or COALESCE

C. COUNT

D. CAST

✅ Correct Answer: B

Explanation:

ISNULL() and COALESCE() return a specified value when a column contains NULL.

Question 4

Why can null values cause problems in joins?

A. Nulls increase query runtime

B. Nulls are treated as zero

C. Nulls never match other values

D. Nulls are automatically filtered

✅ Correct Answer: C

Explanation:

NULL values do not match any value (including other NULLs), which can cause rows to be excluded from join results.

Question 5

Which scenario best justifies keeping null values rather than replacing them?

A. The column is used in joins

B. The null indicates “unknown” or “not applicable”

C. The column is numeric

D. The column has duplicates

✅ Correct Answer: B

Explanation:

Nulls may carry important business meaning and should be preserved when they accurately represent missing or unknown information.

Question 6

Which Fabric tool is most appropriate for visual data profiling to identify missing and null values?

A. Power BI visuals

B. Power Query

C. Semantic models

D. Eventhouse

✅ Correct Answer: B

Explanation:

Power Query provides built-in data profiling features such as column distribution, column quality, and column profile.

Question 7

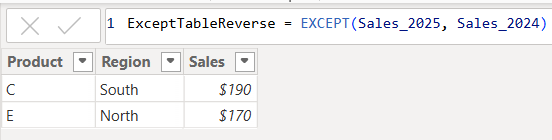

What is the purpose of using an anti join when checking data quality?

A. To merge tables

B. To append data

C. To identify unmatched records

D. To replace null values

✅ Correct Answer: C

Explanation:

Anti joins return rows that do not have a match in another table, making them ideal for identifying missing or orphaned records.

Question 8

Which approach is considered a best practice for handling data quality issues?

A. Fix issues only in reports

B. Clean data as late as possible

C. Resolve issues early in the pipeline

D. Ignore null values

✅ Correct Answer: C

Explanation:

Resolving data quality issues early prevents them from propagating into semantic models and reports.

Question 9

Which Spark operation removes duplicate rows from a DataFrame?

A. filter()

B. groupBy()

C. dropDuplicates()

D. distinctColumns()

✅ Correct Answer: C

Explanation:

dropDuplicates() removes duplicate rows based on one or more columns.

Question 10

A report is missing values for several dates. What is the most likely cause?

A. Duplicate rows

B. Incorrect aggregation logic

C. Missing source data

D. Incorrect data type conversion

✅ Correct Answer: C

Explanation:

Missing dates usually indicate that source records are absent rather than null or duplicated.