This post is part of the DP-600: Implementing Analytics Solutions Using Microsoft Fabric Exam Prep Hub. The topic falls under these sections:

Maintain a data analytics solution

--> Implement security and governance

--> Implement item-level access controls

To Do:

Complete the related module for this topic in the Microsoft Learn course: Secure data access in Microsoft Fabric

Item-level access controls in Microsoft Fabric determine who can access or interact with specific items inside a workspace, rather than the entire workspace. Items include reports, semantic models, lakehouses, warehouses, notebooks, pipelines, dashboards, and other Fabric artifacts.

For the DP-600 exam, it’s important to understand how item-level permissions differ from workspace roles, when to use them, and how they interact with data-level security such as RLS.

What Are Item-Level Access Controls?

Item-level access controls:

- Apply to individual Fabric items

- Are more granular than workspace-level roles

- Allow selective sharing without granting broad workspace access

They are commonly used when:

- Users need access to one report or dataset, not the whole workspace

- Consumers should view content without seeing development artifacts

- External or business users need limited access

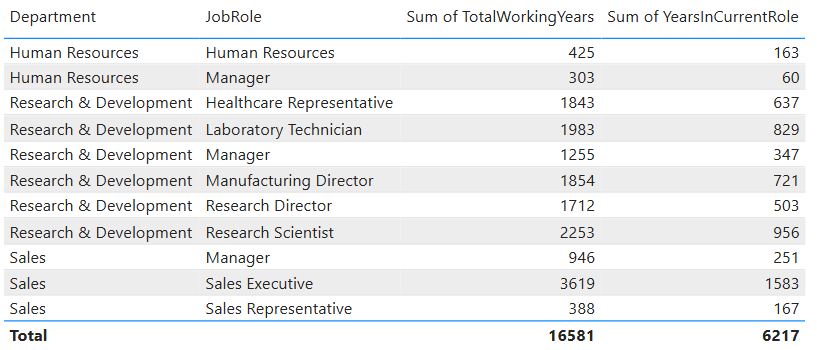

Common Items That Support Item-Level Permissions

In Microsoft Fabric, item-level permissions can be applied to:

- Power BI reports

- Semantic models (datasets)

- Dashboards

- Lakehouses and Warehouses

- Notebooks and pipelines (via workspace + item context)

The most frequently tested scenarios in DP-600 involve reports and semantic models.

Sharing Reports and Dashboards

Report Sharing

Reports can be shared directly with users or groups.

When you share a report:

- Users can be granted View or Reshare permissions

- The report appears in the recipient’s “Shared with me” section

- Access does not automatically grant workspace access

Exam considerations

- Sharing a report does not grant edit permissions

- Sharing does not bypass data-level security (RLS still applies)

- Users must also have access to the underlying semantic model

Semantic Model (Dataset) Permissions

Semantic models support explicit permissions that control how users interact with data.

Common permissions include:

- Read – View and query the model

- Build – Create reports using the model

- Write – Modify the model (typically for owners)

- Reshare – Share the model with others

Typical use cases

- Allow analysts to build their own reports (Build permission)

- Allow consumers to view reports without building new ones

- Restrict direct querying of datasets

Exam tips

- Build permission is required for “Analyze in Excel” and report creation

- RLS and OLS are enforced at the semantic model level

- Dataset permissions can be granted independently of report sharing
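The permission list above can be pictured as a set of flags that is checked per action. The sketch below is a toy model, not a Fabric or Power BI API: the `ModelPermission` enum, the `REQUIRED` mapping, and the action names are all assumptions made up for illustration.

```python
from enum import Flag


class ModelPermission(Flag):
    """Semantic model permissions named in this post (illustrative only)."""
    READ = 1
    BUILD = 2
    WRITE = 4
    RESHARE = 8


# Hypothetical action names mapped to the permission each one needs.
REQUIRED = {
    "view_report": ModelPermission.READ,
    "create_report": ModelPermission.BUILD,
    "analyze_in_excel": ModelPermission.BUILD,
    "edit_model": ModelPermission.WRITE,
    "share_model": ModelPermission.RESHARE,
}


def can_perform(granted: ModelPermission, action: str) -> bool:
    """Return True when the granted flags include the one the action needs."""
    required = REQUIRED[action]
    return (granted & required) == required


consumer = ModelPermission.READ
analyst = ModelPermission.READ | ModelPermission.BUILD

print(can_perform(consumer, "view_report"))       # True
print(can_perform(consumer, "analyze_in_excel"))  # False
print(can_perform(analyst, "analyze_in_excel"))   # True
```

In this model a consumer with only Read can open reports, while "Analyze in Excel" and report creation stay blocked until Build is added.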

Item-Level Access vs Workspace-Level Roles

Understanding this distinction is critical for the exam.

| Feature | Workspace-Level Access | Item-Level Access |
| --- | --- | --- |
| Scope | Entire workspace | Single item |
| Typical roles | Admin, Member, Contributor, Viewer | View, Build, Reshare |
| Best for | Team collaboration | Targeted sharing |
| Granularity | Coarse | Fine-grained |

Key exam insight:

Item-level access does not override workspace permissions. A user cannot edit an item if their workspace role is Viewer, even if the item is shared.
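One way to picture this insight is to treat a user's capabilities on an item as the union of what the workspace role provides and what sharing adds. The sketch is a simplified mental model, not Fabric's actual evaluation logic; the capability names and both lookup tables are assumptions for illustration.

```python
from typing import Optional, Set

# Simplified, assumed capabilities per workspace role (illustration only).
ROLE_CAPABILITIES = {
    "Admin": {"view", "edit", "reshare"},
    "Member": {"view", "edit", "reshare"},
    "Contributor": {"view", "edit"},
    "Viewer": {"view"},
}

# Capabilities added by sharing an item; none of them is "edit".
SHARE_CAPABILITIES = {
    "View": {"view"},
    "Reshare": {"view", "reshare"},
}


def effective_capabilities(role: Optional[str], shared: Set[str]) -> Set[str]:
    """Combine the workspace role (if any) with permissions from sharing."""
    caps = set(ROLE_CAPABILITIES.get(role, set()))
    for permission in shared:
        caps |= SHARE_CAPABILITIES[permission]
    return caps


viewer_with_share = effective_capabilities("Viewer", {"View", "Reshare"})
no_role_with_share = effective_capabilities(None, {"View"})

print("edit" in viewer_with_share)  # False
print(sorted(no_role_with_share))   # ['view']
```

Because sharing never contributes an edit capability in this model, a workspace Viewer stays read-only on a shared item, and a user with no workspace role sees only what was shared.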

Interaction with Data-Level Security

Item-level access works together with:

- Row-Level Security (RLS)

- Column-Level Security (CLS)

- Object-Level Security (OLS)

Important behaviors:

- Sharing a report does not expose restricted rows or columns

- RLS is evaluated based on the user’s identity

- Item access only determines whether a user can query the item, not what data they see
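The behaviors above amount to two separate checks: an item-level gate, then a row filter tied to the user's identity. The following sketch assumes a made-up in-memory table and a hypothetical mapping of users to allowed regions; it illustrates the ordering of the checks, not how Fabric implements RLS.

```python
from typing import Dict, List, Set

# Hypothetical sample data standing in for a semantic model table.
SALES = [
    {"region": "East", "amount": 100},
    {"region": "West", "amount": 250},
    {"region": "East", "amount": 75},
]

ITEM_ACCESS: Set[str] = {"alice", "bob"}            # users the item is shared with
RLS_REGIONS: Dict[str, Set[str]] = {"alice": {"East"}}  # assumed RLS role mapping


def query_model(user: str, rows: List[dict]) -> List[dict]:
    """Apply the item-level gate first, then the row-level filter."""
    if user not in ITEM_ACCESS:
        raise PermissionError(f"{user} has no access to this item")
    allowed = RLS_REGIONS.get(user, set())
    return [row for row in rows if row["region"] in allowed]


print(len(query_model("alice", SALES)))  # 2
print(query_model("bob", SALES))         # []
try:
    query_model("carol", SALES)
except PermissionError as err:
    print(err)                           # carol has no access to this item
```

Here `bob` reproduces the "has report access but sees no data" case: the item gate passes, but no row-level rule matches the identity.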

Common Exam Scenarios

You may encounter questions such as:

- A user can see a report but cannot build a new one → missing Build permission

- A user has report access but sees no data → likely RLS

- A business user needs access to one report only → item-level sharing, not workspace access

- An analyst can’t query a dataset in Excel → lacks Build permission

Best Practices to Remember

- Use item-level access for consumers and ad-hoc sharing

- Use workspace roles for development teams

- Assign permissions to Entra ID security groups when possible

- Always pair item access with appropriate semantic model permissions
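To see why group-based assignment is easier to maintain, consider a minimal sketch in which permissions are attached to groups and users inherit them through membership. The group names, item names, and data structures are hypothetical; no Entra ID or Fabric API is called.

```python
from typing import Dict, Set

# Hypothetical security groups and their members.
GROUP_MEMBERS: Dict[str, Set[str]] = {
    "sg-sales-consumers": {"alice", "bob"},
    "sg-sales-analysts": {"carol"},
}

# Item permissions are granted to groups; the report and its model are paired.
ITEM_GRANTS: Dict[str, Dict[str, Set[str]]] = {
    "Sales Report": {
        "sg-sales-consumers": {"View"},
        "sg-sales-analysts": {"View"},
    },
    "Sales Model": {
        "sg-sales-consumers": {"Read"},
        "sg-sales-analysts": {"Read", "Build"},
    },
}


def permissions_for(user: str, item: str) -> Set[str]:
    """Union of the permissions from every group the user belongs to."""
    granted: Set[str] = set()
    for group, perms in ITEM_GRANTS.get(item, {}).items():
        if user in GROUP_MEMBERS.get(group, set()):
            granted |= perms
    return granted


print(sorted(permissions_for("alice", "Sales Model")))  # ['Read']

# Promoting alice to analyst is a membership change; ITEM_GRANTS is untouched.
GROUP_MEMBERS["sg-sales-analysts"].add("alice")
print(sorted(permissions_for("alice", "Sales Model")))  # ['Build', 'Read']
```

The item grants are defined once per group, so onboarding or promoting a user only changes group membership.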

Key Exam Takeaways

- Item-level access controls provide fine-grained security

- Reports and semantic models are the most tested items

- Build permission is critical for self-service analytics

- Item-level access complements, but does not replace, workspace roles

Exam Tips

- Think “Can they see the object at all?”

- Combine:

  - Workspace roles → broad access

  - Item-level access → fine-grained control

  - RLS/CLS → data-level restrictions

- Expect scenarios involving:

  - Preventing access to lakehouses

  - Separating authors from consumers

  - Protecting production assets

- If a question asks who can view or build from a specific report or dataset without granting workspace access, the correct answer almost always involves item-level access controls.

Practice Questions:

Question 1 (Single choice)

What is the PRIMARY purpose of item-level access controls in Microsoft Fabric?

A. Control which rows a user can see

B. Control which columns a user can see

C. Control access to specific workspace items

D. Control DAX query execution speed

Correct Answer: C

Explanation:

- Item-level access controls determine who can access specific items (lakehouses, warehouses, semantic models, notebooks, reports).

- Row-level and column-level security are data-level controls applied inside an item (such as a semantic model or warehouse), not item-level controls.

Question 2 (Scenario-based)

A user should be able to view reports but must NOT access the underlying lakehouse or semantic model. Which control should you use?

A. Workspace Viewer role

B. Item-level permissions on the lakehouse and semantic model

C. Row-level security

D. Column-level security

Correct Answer: B

Explanation:

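- Item-level permissions are granted per item, so access to the lakehouse and semantic model is controlled separately from the reports instead of opening the whole workspace.

- A workspace role such as Viewer applies to every item in the workspace, so it cannot be limited to the reports alone.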

Question 3 (Multi-select)

Which Fabric items support item-level access control? (Select all that apply.)

A. Lakehouses

B. Warehouses

C. Semantic models

D. Power BI reports

Correct Answers: A, B, C, D

Explanation:

- Item-level access can be applied to most Fabric artifacts, including data storage, models, and reports.

- This allows fine-grained governance beyond workspace roles.

Question 4 (Scenario-based)

You want data engineers to manage a lakehouse, but analysts should only consume a semantic model built on top of it. What is the BEST approach?

A. Assign Analysts as Workspace Viewers

B. Grant Analysts item-level access to the semantic model only, with no permissions on the lakehouse

C. Use Row-Level Security only

D. Disable SQL endpoint access

Correct Answer: B

Explanation:

- Analysts receive item-level permissions on the semantic model and are given no permissions on the lakehouse. Fabric item permissions grant access; there is no explicit deny.

- This is a common enterprise pattern in Fabric.

Question 5 (Single choice)

Which permission is required for a user to edit or manage an item at the item level?

A. Read

B. View

C. Write

D. Execute

Correct Answer: C

Explanation:

- Write permissions allow editing, updating, or managing an item.

- Read/View permissions are consumption-only.

Question 6 (Scenario-based)

A user can see a report but receives an error when trying to connect to its semantic model using Power BI Desktop. Why?

A. XMLA endpoint is disabled

B. They lack item-level permission on the semantic model

C. The dataset is in Direct Lake mode

D. The report uses DirectQuery

Correct Answer: B

Explanation:

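- Being able to view a report does not give a user permission to connect to the underlying semantic model from Power BI Desktop.

- Connecting to the model requires Build permission, which must be granted explicitly on the semantic model.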

Question 7 (Multi-select)

Which statements about workspace access vs item-level access are TRUE? (Select all that apply.)

A. Workspace access automatically grants access to all items

B. Item-level access can grant access to a single item without a workspace role

C. Item-level access overrides Row-Level Security

D. Workspace roles are broader than item-level permissions

Correct Answers: A, B, D

Explanation:

- Workspace roles define baseline access and apply to every item in the workspace.

- Item-level access adds permissions on specific assets, including for users with no workspace role; it cannot take away what a workspace role grants.

- RLS still applies within semantic models.

Question 8 (Scenario-based)

You want to prevent accidental modification of a production semantic model while still allowing users to query it. What should you do?

A. Assign Viewer role at the workspace level

B. Grant Read permission at the item level

C. Disable the SQL endpoint

D. Remove the semantic model

Correct Answer: B

Explanation:

- Read item-level permission allows querying and consumption without edit rights.

- This is safer than relying on workspace roles alone.

Question 9 (Single choice)

Which security layer is MOST appropriate for restricting access to entire objects rather than data within them?

A. Row-level security

B. Column-level security

C. Object-level security

D. Item-level access control

Correct Answer: D

Explanation:

- Item-level access controls whether a user can access an object at all.

- Object-level security applies inside semantic models.

Question 10 (Scenario-based)

A compliance requirement states that only approved users can access notebooks in a workspace. What is the BEST solution?

A. Place notebooks in a separate workspace

B. Apply item-level access controls to notebooks

C. Use Row-Level Security

D. Restrict workspace Viewer access

Correct Answer: B

Explanation:

- Item-level access allows targeted restriction without restructuring workspaces.

- This is the preferred Fabric governance approach.