This post is a part of the DP-600: Implementing Analytics Solutions Using Microsoft Fabric Exam Prep Hub; and this topic falls under these sections:

Prepare data

--> Transform data

--> Implement a star schema for a lakehouse or warehouse

Designing and implementing an effective schema is foundational to efficient analytics. In Microsoft Fabric, structuring your data into a star schema dramatically improves query performance, simplifies reporting, and aligns with best practices for BI workloads.

This article explains what a star schema is, why it matters in Fabric, and how to implement it in a lakehouse or data warehouse.

What Is a Star Schema?

A star schema is a relational modeling technique that organizes data into two primary types of tables:

- Fact tables: Contain measurable, quantitative data (metrics, transactions, events).

- Dimension tables: Contain descriptive attributes (e.g., customer info, product details, dates).

Star schemas get their name because the design resembles a star—a central fact table linked to multiple dimension tables.

Why Use a Star Schema?

A star schema offers multiple advantages for analytical workloads:

- Improved query performance: Queries are simplified and optimized due to straightforward joins.

- Simpler reporting: BI tools like Power BI map naturally to star schemas.

- Aggregations and drill-downs: Dimension tables support filtering and hierarchy reporting.

- Better scalability: Optimized for large datasets and parallel processing.

In Fabric, both lakehouses and warehouses support star schema implementations, depending on workload and user needs.

Core Components of a Star Schema

1. Fact Tables

Fact tables store the numeric measurements of business processes.

Common characteristics:

- Contains keys linking to dimensions

- Often large and wide

- Used for aggregations (SUM, COUNT, AVG, etc.)

Examples:

Sales transactions, inventory movement, website events

2. Dimension Tables

Dimension tables describe contextual attributes.

Common characteristics:

- Contain descriptive fields

- Usually smaller than fact tables

- Often used for filtering/grouping

Examples:

Customer, product, date, geography

Implementing a Star Schema in a Lakehouse

Lakehouses in Fabric support Delta format tables and both Spark SQL and T-SQL analytics endpoints.

Steps to Implement:

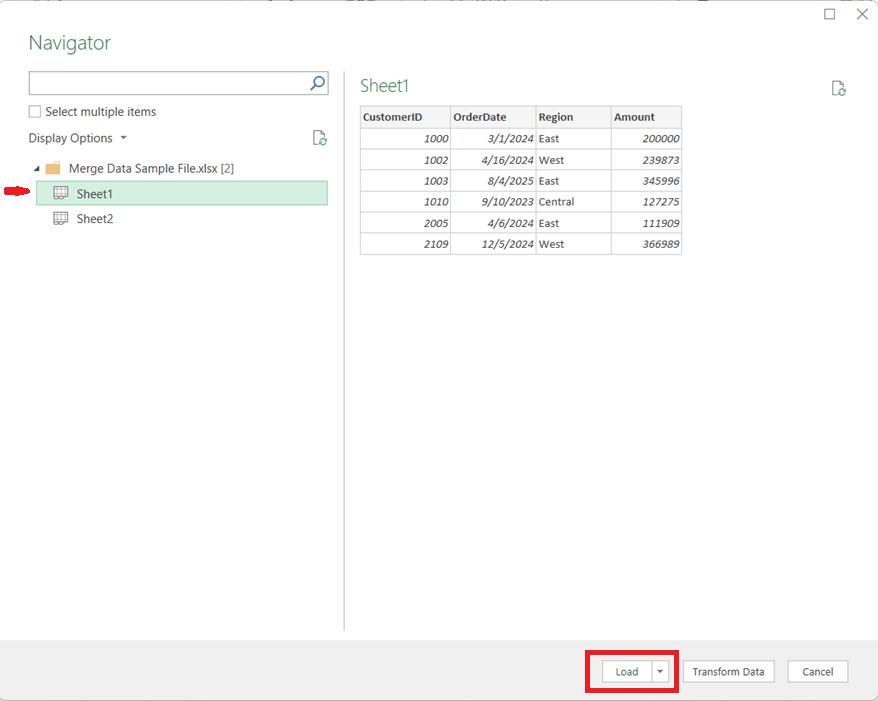

- Ingest raw data into your lakehouse (as files or staging tables).

- Transform data:

- Cleanse and conform fields

- Derive business keys

- Create dimension tables:

- Deduplicate

- Add descriptive attributes

- Create fact tables:

- Join transactional data to dimension keys

- Store numeric measures

- Optimize:

- Partition and Z-ORDER for performance

Tools You Might Use:

- Notebooks (PySpark)

- Lakehouse SQL

- Data pipelines

Exam Tip:

Lakehouses are ideal when you need flexibility, schema evolution, or combined batch + exploratory analytics.

Implementing a Star Schema in a Warehouse

Data warehouses in Fabric provide a SQL-optimized store designed for BI workloads.

Steps to Implement:

- Stage raw data in warehouse tables

- Build conforming dimension tables

- Build fact tables with proper keys

- Add constraints and indexes (as appropriate)

- Optimize with materialized views or aggregations

Warehouse advantages:

- Strong query performance for BI

- Native SQL analytics

- Excellent integration with Power BI and semantic models

Exam Tip:

Choose a warehouse when your priority is high-performance BI analytics with well-defined dimensional models.

Common Star Schema Patterns

Conformed Dimensions

- Dimensions shared across multiple fact tables

- Ensures consistent filtering and reporting across business processes

Slowly Changing Dimensions (SCD)

- Maintain historical attribute changes

- Types include Type 1 (overwrite) and Type 2 (versioning)

Fact Table Grain

- Define the “grain” (level of detail) clearly—for example, “one row per sales transaction.”

Star Schema and Power BI Semantic Models

Semantic models often sit on top of star schemas:

- Fact tables become measure containers

- Dimensions become filtering hierarchies

- Reduces DAX complexity

- Improves performance

Best Practice: Structure your lakehouse or warehouse into a star schema before building the semantic model.

Star Schema in Lakehouse vs Warehouse

| Feature | Lakehouse | Warehouse |

| Query engines | Spark & SQL | SQL only |

| Best for | Mixed workloads (big data + SQL) | BI & reporting |

| Optimization | Partition/Z-ORDER | Indexing and statistics |

| Tooling | Notebooks, pipelines | SQL scripts, BI artifacts |

| Schema complexity | Flexible | Rigid |

Governance and Performance Considerations

- Use consistent keys across facts and dimensions

- Validate referential integrity where possible

- Avoid wide, unindexed tables for BI queries

- Apply sensitivity labels on schemas for governance

- Document schema and business logic

What to Know for the DP-600 Exam

Be prepared to:

- Explain the purpose of star schema components

- Identify when to implement star schema in lakehouses vs warehouses

- Recognize patterns like conformed dimensions and SCDs

- Understand performance implications of schema design

- Relate star schema design to Power BI and semantic models

Final Exam Tip

If the question emphasizes high-performance reporting, simple joins, and predictable filtering, think star schema.

If it mentions big data exploration or flexible schema evolution, star schema in a lakehouse may be part of the answer.

Practice Questions:

Here are 10 questions to test and help solidify your learning and knowledge. As you review these and other questions in your preparation, make sure to …

- Identifying and understand why an option is correct (or incorrect) — not just which one

- Look for and understand the usage scenario of keywords in exam questions to guide you

- Expect scenario-based questions rather than direct definitions

1. What is the defining characteristic of a star schema?

A. Multiple fact tables connected through bridge tables

B. A central fact table connected directly to dimension tables

C. Fully normalized transactional tables

D. A schema optimized for OLTP workloads

Correct Answer: B

Explanation:

A star schema consists of a central fact table directly linked to surrounding dimension tables, forming a star-like structure optimized for analytics.

2. Which type of data is stored in a fact table?

A. Descriptive attributes such as names and categories

B. Hierarchical metadata for navigation

C. Quantitative, measurable values

D. User access permissions

Correct Answer: C

Explanation:

Fact tables store numeric measures (e.g., sales amount, quantity) that are aggregated during analytical queries.

3. Which table type is typically smaller and used for filtering and grouping?

A. Fact table

B. Dimension table

C. Bridge table

D. Staging table

Correct Answer: B

Explanation:

Dimension tables store descriptive attributes and are commonly used for filtering, grouping, and slicing fact data in reports.

4. Why are star schemas preferred for Power BI semantic models?

A. They eliminate the need for relationships

B. They align naturally with BI tools and optimize query performance

C. They reduce OneLake storage usage

D. They replace DAX calculations

Correct Answer: B

Explanation:

Power BI and other BI tools are optimized for star schemas, which simplify joins, reduce model complexity, and improve performance.

5. When implementing a star schema in a Fabric lakehouse, which storage format is typically used?

A. CSV

B. JSON

C. Parquet

D. Delta

Correct Answer: D

Explanation:

Fabric lakehouses store tables in Delta format, which supports ACID transactions and efficient analytical querying.

6. Which scenario most strongly suggests using a warehouse instead of a lakehouse for a star schema?

A. Schema evolution and exploratory data science

B. High-performance, SQL-based BI reporting

C. Streaming ingestion of real-time events

D. Semi-structured data exploration

Correct Answer: B

Explanation:

Fabric warehouses are optimized for SQL-based analytics and BI workloads, making them ideal for star schemas supporting reporting scenarios.

7. What does the “grain” of a fact table describe?

A. The number of dimensions in the table

B. The level of detail represented by each row

C. The size of the table in storage

D. The indexing strategy

Correct Answer: B

Explanation:

The grain defines the level of detail for each row in the fact table (e.g., one row per transaction or per day).

8. What is a conformed dimension?

A. A dimension used by only one fact table

B. A dimension that contains only numeric values

C. A shared dimension used consistently across multiple fact tables

D. A dimension generated dynamically at query time

Correct Answer: C

Explanation:

Conformed dimensions are shared across multiple fact tables, enabling consistent filtering and reporting across different business processes.

9. Which design choice improves performance when querying star schemas?

A. Highly normalized dimension tables

B. Complex many-to-many relationships

C. Simple joins between fact and dimension tables

D. Storing dimensions inside the fact table

Correct Answer: C

Explanation:

Star schemas minimize join complexity by using simple, direct relationships between facts and dimensions, improving query performance.

10. Which statement best describes how star schemas fit into the Fabric analytics lifecycle?

A. They replace semantic models entirely

B. They are used only for real-time analytics

C. They provide an analytics-ready structure for reporting and modeling

D. They are required only for data ingestion

Correct Answer: C

Explanation:

Star schemas organize data into an analytics-ready structure that supports semantic models, reporting, and scalable BI workloads.